Driving Licence to Kill

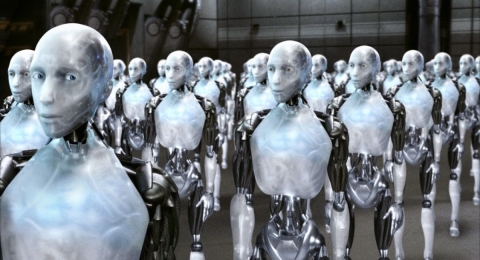

We’re living through exciting times where we can see the development and uptake of autonomous cars, something which generations before could only imagine in sci-fi films. These futuristic vehicles promise an array of benefits, from greater freedom and independence for those who cannot normally drive, to easing the monotonous commute to work. Most recently, Tesla have even announced an ‘Uber-like’ service for their cars, whereby the vehicle can collect the owner when required, whilst acting as an autonomous taxi during the day to earn the owner money when they are not using their car.

Nevertheless, it’s important that the issues associated with autonomous cars are explored and tackled before they escalate, as these vehicles become increasingly prevalent in society. After the first reported fatality caused by an autonomous car crash, we are drawing closer to a time when we need to seriously consider the question of how these vehicles, rather than the driver, will deal with life-or-death situations.

Put simply, how will autonomous cars react to situations in which others are in danger? A common way of exploring this issue is through the Trolley Problem. The basis of the Trolley Problem is this: five people are tied to a train track with a train heading towards them, and you have the option of pulling a lever so that the train will switch to a different set of tracks. However, there is one person on the other track. So what do you do – do nothing, and the train kills five people, or pull the lever, and the train kills one person?

This is a difficult ethical problem to answer, but one in which approximately 90% of respondents choose to kill the one to save the five. But you can start to see where the issue arises with autonomous vehicles. When designing the software within autonomous vehicles, car-makers will have to consider this problem. This could even include situations that put the driver or passengers at risk of harm to save others.

So you may wonder what the problem is – surely car makers should just programme the cars to cause minimal loss of life? Well, consider this – would you want to buy a car that would choose to kill you if the situation arose? It is complications such as these that make this problem so difficult to solve. Car manufacturers, insurance firms and policy makers will all have to consider them, and the solution they choose will no doubt be subject to controversy and debate.

Whatever the solution, it will have to stem from engagement with the wider public. Large surveys, as well as other academic research, would give us a better insight into how this issue should be addressed. Nevertheless, it raises ethical issues not previously considered in vehicles, and the resulting legislation will pave the way for the future of smart and autonomous technology.

Fundamentally, it will lead to a new, ‘artificial’, morality.
